In iOS 17, Parents Can't Delete the `#images` GIF Search iMessage App

Until iOS 17, parents could remove the dangerous iMessage app called #images, but the latest release removes this control, exposing millions of kids to graphic sexual content.

- We've just released a free iOS app called Gertrude Blocker which plugs this dangerous hole in Screen Time for both iOS 17 and 18. It is the only known workaround for iOS 18.

- There is a workaround for iOS 17: if you set a scheduled Downtime to be essentially always on (by starting at 3:01am and ending at 3:00am), the #images GIF search will stop working. Every other app you want to allow must then be added to Always Allowed, and you lose the ability to use Downtime for its intended purpose. For this reason, we recommend using Gertrude Blocker instead.
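To see why a Downtime schedule of 3:01am to 3:00am is "essentially always on," here is a rough sketch of the arithmetic. It is only an illustration: the function names are hypothetical, and it assumes the window includes its start minute and excludes its end minute, which may not match how Screen Time treats the boundary.

```python
from datetime import time

MINUTES_PER_DAY = 24 * 60


def minutes_covered(start: time, end: time) -> int:
    """Length in minutes of a daily window that may wrap past midnight."""
    start_min = start.hour * 60 + start.minute
    end_min = end.hour * 60 + end.minute
    # Modulo handles the wrap-around case where end is earlier than start.
    return (end_min - start_min) % MINUTES_PER_DAY


def in_downtime(now: time, start: time, end: time) -> bool:
    """True if `now` falls in the window [start, end) (assumed half-open)."""
    if start <= end:
        return start <= now < end
    # Window wraps past midnight: active from start to midnight, then until end.
    return now >= start or now < end


# The schedule described in the workaround above.
START = time(3, 1)
END = time(3, 0)

print(minutes_covered(START, END))          # minutes of the day under Downtime
print(in_downtime(time(12, 0), START, END))  # midday
print(in_downtime(time(3, 0), START, END))   # the one-minute gap
```

Under these assumptions the schedule covers 1,439 of the day's 1,440 minutes, leaving a single uncovered minute at 3:00am.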

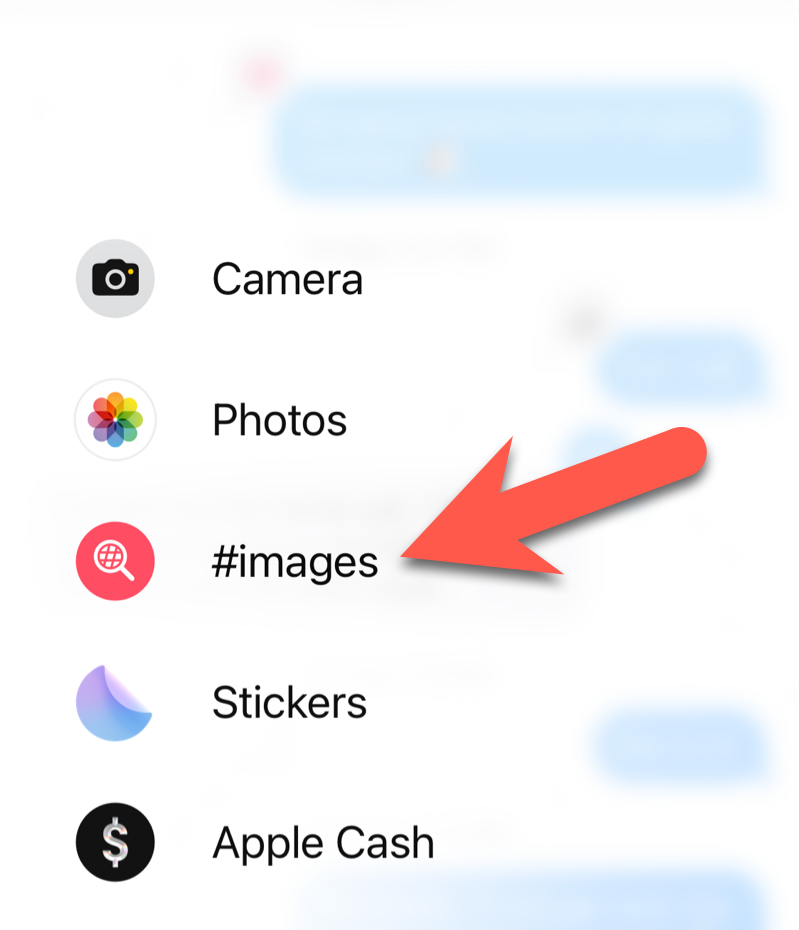

Apple's texting app "Messages" comes with a feature that allows you to search for animated GIFs to insert into texts, including many images that are extremely inappropriate for children.

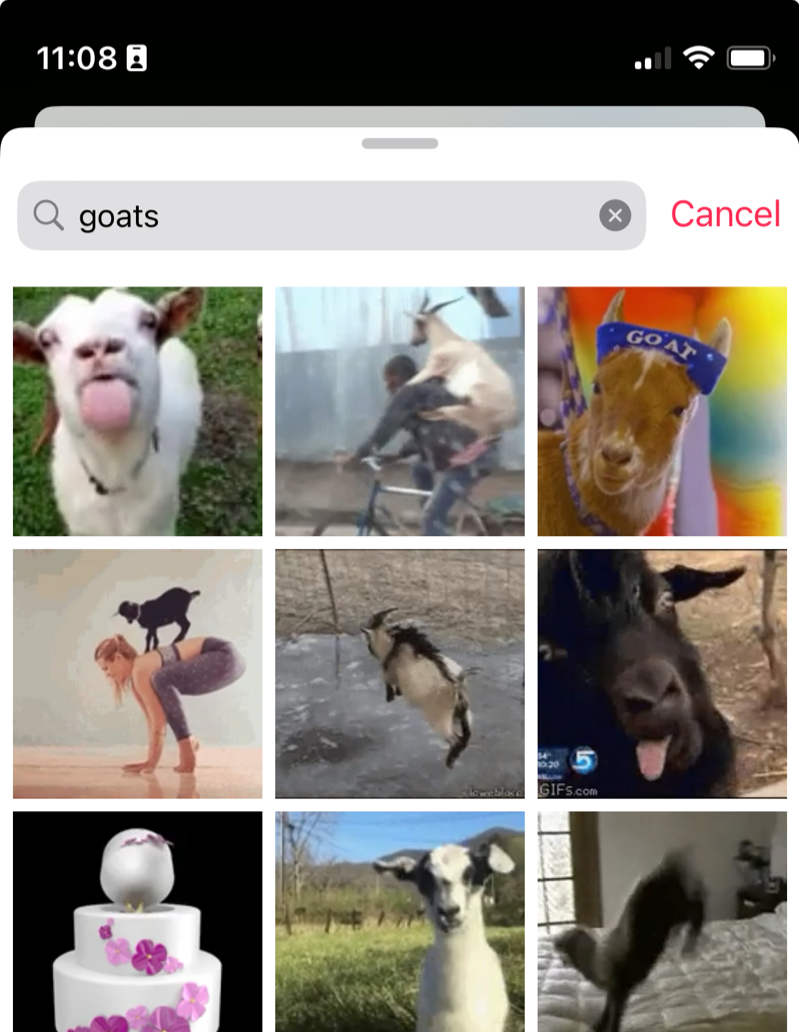

If you've never tried it, this iMessage app allows searching for animated GIFs. In the image below, you can see the result of a search for goats, but a less innocent search would fill the screen with images not suitable for children.

While the #images GIF search seems to block full nudity and certain explicit searches, it still grants access to thousands of sexually graphic images that most parents would never permit young children to see. Try searching for bikini or lingerie if you're skeptical, and remember that millions of children have iPhones.

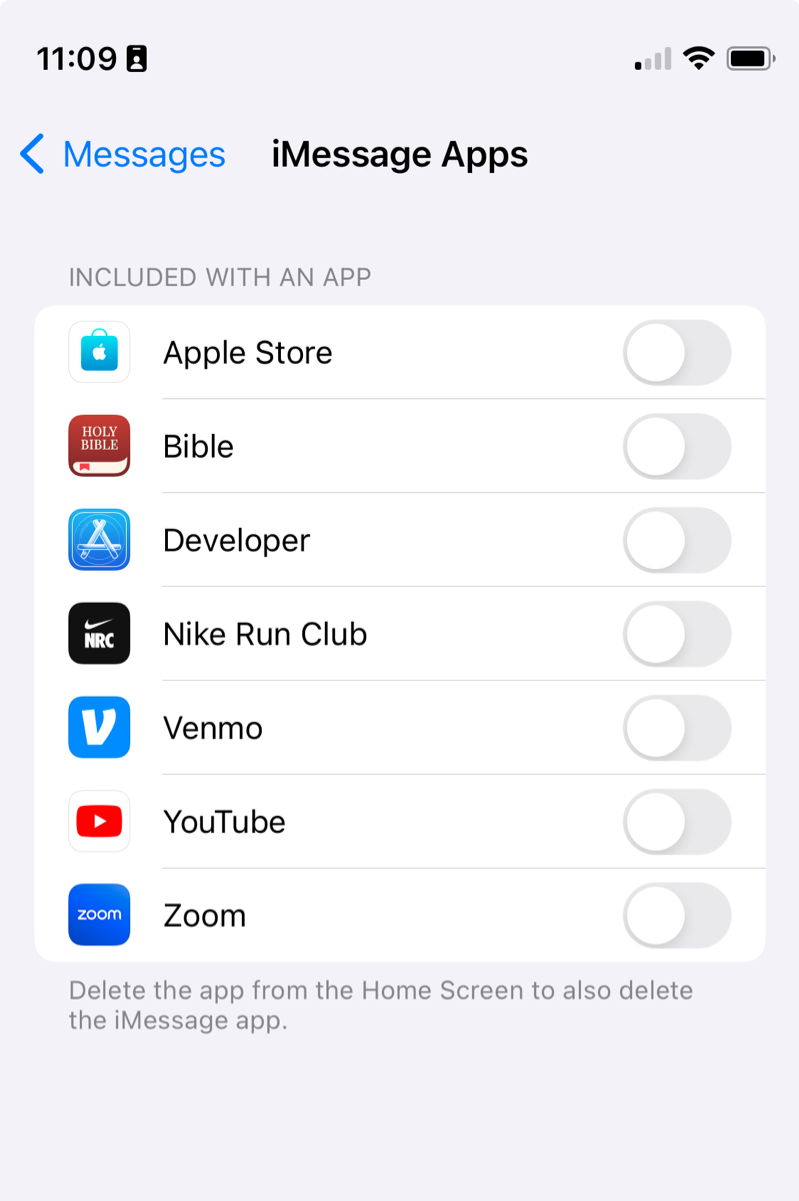

iOS 17 does allow removing third-party iMessage apps through a new Settings area, but the apps provided by Apple, including #images, do not appear there as options to disable.

- Please take 2 minutes to file a bug report here, letting Apple know that this is a serious problem you care about. The more people who report the issue, the sooner it will get fixed.

- Help raise awareness and increase the pressure on Apple by sharing this article on social media.

- If your kids' iOS devices aren't updated to iOS 17 yet, don't update. Stay on iOS 16 until Apple fixes the issue. iOS 16 will work well for several years at least.

- If you've already updated, we recommend temporarily crippling the Messages app by using Screen Time's App Limits feature to allow it for only 1 minute each day.

The Gertrude Mac app helps you protect your kids online with strict internet filtering that you can manage from your own computer or phone, plus remote monitoring of screenshots and keylogging. $10/mo, with a 21-day free trial.